Artificial Intelligence (AI) is evolving rapidly, and one of the most exciting frontiers in this field is multimodal AI. This technology allows models to process and interpret information from different modalities, such as text, images, and audio. Two of the leading contenders in the multimodal AI space are LLaMA 3.2 90B Vision and GPT-4. Both models have shown tremendous potential in understanding and generating responses across various data formats, but how do they compare?

This article will examine both models, exploring their strengths and weaknesses and where each one excels in real-world applications.

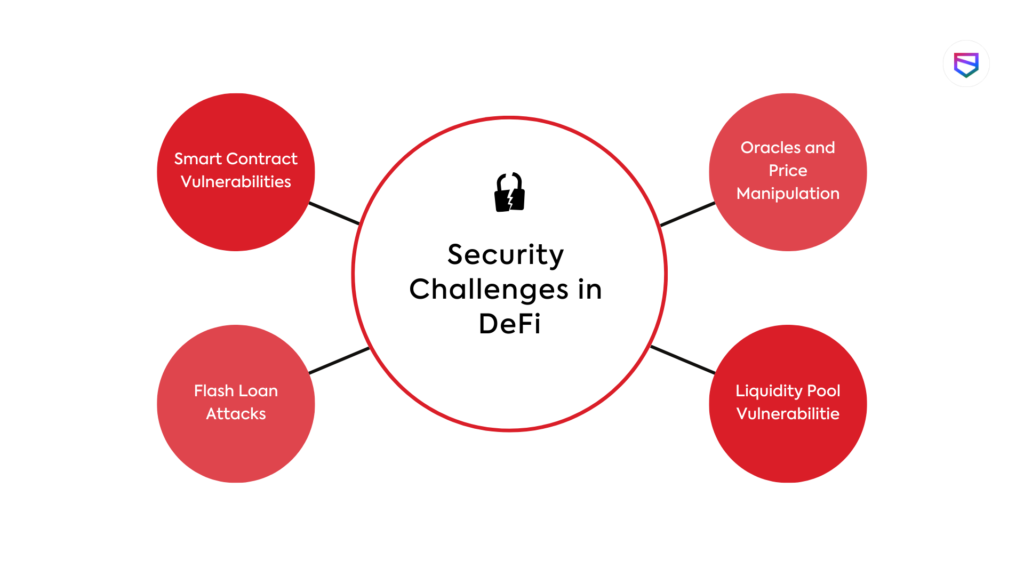

What Is Multimodal AI?

Multimodal AI refers to systems capable of simultaneously processing and analyzing multiple types of data—like text, images, and sound. This ability is crucial for AI to understand context and provide richer, more accurate responses. For example, in a medical diagnosis, the AI might process both patient records (text) and X-rays (images) to give a comprehensive evaluation.

Multimodal AI can be found in many fields such as autonomous driving, robotics, and content creation, making it an indispensable tool in modern technology.
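To make the medical example above concrete, here is a minimal sketch of how a text question and an image might be bundled into one request message. The function name `build_multimodal_message` is hypothetical, and the field layout (a list of typed content parts with the image carried as a base64 data URL) is an assumption modeled on a convention common to chat-style APIs. Check the exact field names against your provider's documentation before relying on them.

```python
import base64
import json


def build_multimodal_message(question: str, image_bytes: bytes,
                             mime_type: str = "image/png") -> dict:
    """Bundle a text question and one image into a single user message.

    Assumption: the provider accepts a list of typed content parts and
    images encoded as base64 data URLs. Field names are illustrative.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            {
                "type": "image_url",
                "image_url": {"url": f"data:{mime_type};base64,{encoded}"},
            },
        ],
    }


if __name__ == "__main__":
    # Placeholder bytes stand in for a real X-ray file read from disk.
    fake_xray = b"placeholder image bytes"
    message = build_multimodal_message(
        "Summarize any abnormalities visible in this chest X-ray.", fake_xray
    )
    print(json.dumps(message, indent=2))
```

The point of the sketch is that both modalities travel in the same message, so the model can relate the question to the image rather than handling each in isolation.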

Overview of LLaMA 3.2 90B Vision

LLaMA 3.2 90B Vision is a multimodal model in Meta's LLaMA series, designed to handle complex tasks that combine images and text. With 90 billion parameters, it is tuned for both language and vision, making it highly effective in tasks that require image recognition and understanding.

One of its key features is its ability to process high-resolution images and perform tasks like object detection, scene recognition, and even image captioning with high accuracy. LLaMA 3.2 stands out due to its specialization in visual data, making it a go-to choice for AI projects that need heavy lifting in image processing.
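To put the 90 billion parameter figure in practical terms, the following back-of-the-envelope sketch estimates how much memory the weights alone would occupy at common numeric precisions. The helper `weight_memory_gb` and the precision table are illustrative assumptions, not anything published for this model, and the estimate ignores activations, caches, and runtime overhead.

```python
# Bytes used to store one parameter at each precision (standard sizes).
BYTES_PER_PARAM = {"fp32": 4, "fp16/bf16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Return the memory needed for the weights alone, in GB (10^9 bytes)."""
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    params = 90e9  # 90 billion parameters, as stated in the text
    for precision, size in BYTES_PER_PARAM.items():
        print(f"{precision:>10}: {weight_memory_gb(params, size):.0f} GB")
```

At 16-bit precision this works out to roughly 180 GB for the weights, which is why a model of this size is typically served across several accelerators or in a quantized form.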

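Advantages:

Superior visual understanding

High accuracy in vision tasks such as object detection and image captioning

Limitations:

Weaker in language tasks compared to GPT-4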

Overview of GPT-4

GPT-4, on the other hand, is a more generalist model. Known for its robust language generation abilities, GPT-4 can now also handle visual data as part of its multimodal functionality. While not initially designed with vision as a primary focus, its integration of visual processing modules allows it to interpret images, understand charts, and perform tasks like image description.

GPT-4’s strength lies in its contextual understanding of language, paired with its newfound ability to interpret visuals, which makes it highly versatile. It may not be as specialized in vision tasks as LLaMA 3.2, but it is a powerful tool when combining text and image inputs.

Advantages:

Best-in-class text generation and understanding

Versatile across multiple domains, including multimodal tasks

Limitations:

Less specialized in detailed image analysis than LLaMA 3.2

Technological Foundations: LLaMA 3.2 vs. GPT-4

The foundation of both models lies in their neural architectures, which allow them to process data at scale.

Comparison Chart: LLaMA 3.2 90B Vision vs. GPT-4

| Feature | LLaMA 3.2 90B Vision | GPT-4 |
| --- | --- | --- |
| Model Size | 90 billion parameters | Not publicly disclosed by OpenAI |
| Core Focus | Vision-centric (image analysis and understanding) | Language-centric with multimodal (text + image) support |
| Architecture | Transformer-based with specialization in vision tasks | Transformer-based with multimodal extensions |
| Multimodal Capabilities | Strong in vision + text, especially high-resolution images | Versatile in text + image, more balanced integration |
| Vision Task Performance | Excellent for tasks like object detection, image captioning | Good, but not as specialized in visual analysis |
| Language Task Performance | Competent, but not as advanced as GPT-4 | Superior in language understanding and generation |
| Image Recognition | High accuracy in object and scene recognition | Capable, but less specialized |
| Image Generation | Can describe and analyze images but not generate new images | Describes, interprets, and can suggest visual content |
| Text Generation | Strong, but secondary to vision tasks | Best-in-class for generating and understanding text |
| Training Data Focus | Primarily trained on large-scale image datasets with language | Balanced training on text and images |
| Real-World Applications | Healthcare imaging, autonomous driving, security, robotics | Content creation, customer support, education, coding |
| Strengths | Superior visual understanding, high accuracy in vision tasks | Versatility across text, image, and multimodal tasks |
| Weaknesses | Weaker in language tasks compared to GPT-4 | Less specialized in detailed image analysis |
| Open Source | Openly available weights under Meta's Llama 3.2 Community License | Closed-source (proprietary model by OpenAI) |
| Use Cases | Best for vision-heavy applications requiring precise image analysis | Ideal for general AI, customer service, content generation, and multimodal tasks |

LLaMA 3.2 90B Vision boasts an architecture optimized for large-scale vision tasks. Its neural network is designed to handle image inputs efficiently and understand complex visual structures.

GPT-4, in contrast, is built on a transformer architecture with a strong focus on text, though it now integrates modules to handle visual input. OpenAI has not published its parameter count, so a direct size comparison with LLaMA 3.2 is not possible, but the model has been tuned for more generalized tasks.

Vision Capabilities of LLaMA 3.2 90B

LLaMA 3.2 shines when it comes to vision-related tasks. Its ability to handle large images with high precision makes it ideal for industries requiring fine-tuned image recognition, such as healthcare or autonomous vehicles.

It can perform:

Object detection

Scene recognition

Image captioning

Thanks to its vision-centric design, LLaMA 3.2 excels in domains where precision and detailed visual understanding are paramount.

Vision Capabilities of GPT-4

Although not built primarily for vision tasks, GPT-4’s multimodal capabilities allow it to understand and interpret images. Its visual understanding is geared more toward contextualizing images with text than toward deep technical visual analysis.

For example, it can:

Generate captions for images

Interpret basic visual data like charts

Combine text and images to provide holistic answers

While competent, GPT-4’s visual performance isn’t as advanced as LLaMA 3.2’s in highly technical fields like medical imaging or detailed object detection.

Language Processing Abilities of LLaMA 3.2

LLaMA 3.2 is not just a vision specialist; it also performs well in natural language processing. Though GPT-4 outshines it in this domain, LLaMA 3.2 can hold its own on general language tasks.

However, its main strength still lies in vision-based tasks.

Language Processing Abilities of GPT-4

GPT-4 dominates when it comes to text. Its ability to generate coherent, contextually relevant responses is unparalleled. Whether it’s complex reasoning, storytelling, or answering highly technical questions, GPT-4 has proven itself a master of language.

Combined with its visual processing abilities, GPT-4 can offer a comprehensive understanding of multimodal inputs, integrating text and images in ways that LLaMA 3.2 may struggle with.

Multimodal Understanding: Key Differentiators

The key difference between the two models lies in how they handle multimodal data.

LLaMA 3.2 90B Vision specializes in integrating images with text, excelling in tasks that require deep visual analysis alongside language processing.

GPT-4, while versatile, leans more toward language but can still manage multimodal tasks effectively.

In real-world applications, LLaMA 3.2 might be better suited for industries heavily reliant on vision (e.g., autonomous driving), while GPT-4’s strengths lie in areas requiring a balance of language and visual comprehension, like content creation or customer service.
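The guidance in this section can be expressed as a simple lookup. The sketch below is only an illustration of the article's recommendations: the `Task` categories and the `suggest_model` helper are hypothetical names, and a real selection process would also weigh cost, latency, licensing, and your own evaluation results.

```python
from enum import Enum


class Task(Enum):
    MEDICAL_IMAGING = "medical_imaging"
    AUTONOMOUS_DRIVING = "autonomous_driving"
    SECURITY = "security"
    CONTENT_CREATION = "content_creation"
    CUSTOMER_SERVICE = "customer_service"
    EDUCATION = "education"


# Tasks this article treats as vision-heavy.
VISION_HEAVY = {Task.MEDICAL_IMAGING, Task.AUTONOMOUS_DRIVING, Task.SECURITY}


def suggest_model(task: Task) -> str:
    """Return the model this article recommends for a task category."""
    if task in VISION_HEAVY:
        return "LLaMA 3.2 90B Vision"
    # Everything else is treated as a language-led or mixed workload.
    return "GPT-4"


if __name__ == "__main__":
    for task in Task:
        print(f"{task.value:>20} -> {suggest_model(task)}")
```

Keeping the mapping in one place makes it easy to revise once you have measured both models on your own data.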

Training Data and Methodologies

LLaMA 3.2 and GPT-4 were trained on vast datasets, but their focus areas differed:

LLaMA 3.2 was trained with a significant emphasis on visual data alongside language, allowing it to excel in vision-heavy tasks.

GPT-4, conversely, was trained on a more balanced mix of text and images, prioritizing language while also learning to handle visual inputs.

Both models used advanced machine learning techniques like reinforcement learning from human feedback (RLHF) to fine-tune their responses and align them more closely with human preferences.
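For readers unfamiliar with RLHF, one common ingredient is a reward model trained on pairs of responses that human raters have ranked. The sketch below shows a widely used pairwise loss for that step. It is a generic textbook formulation, not the training code of either model, and the function name `preference_loss` and its scalar inputs are assumptions made for illustration.

```python
import math


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise preference loss: -log(sigmoid(r_chosen - r_rejected)).

    The loss is small when the reward model scores the human-preferred
    response well above the rejected one, and large when it does not.
    """
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) equals log(1 + exp(-m)); branch to avoid overflow.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


if __name__ == "__main__":
    # Hypothetical reward scores, chosen only to show the trend.
    print(preference_loss(2.0, 0.0))  # preferred response scored higher
    print(preference_loss(0.0, 2.0))  # preferred response scored lower
```

Minimizing this loss over many ranked pairs teaches the reward model to agree with human judgments. That reward signal is then used to fine-tune the language model itself.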

When it comes to performance, both models have their strengths:

LLaMA 3.2 90B Vision performs exceptionally well in vision-related tasks like object detection, segmentation, and image captioning.

GPT-4 outperforms LLaMA in text generation, creative writing, and answering complex queries that involve both text and images.

In benchmark tests for language tasks, GPT-4 has consistently higher accuracy, but LLaMA 3.2 scores better in image-related tasks.

Use Cases and Applications

LLaMA 3.2 90B Vision is ideal for fields like medical imaging, security, and autonomous systems that require advanced visual analysis.

GPT-4 finds its strength in customer support, content generation, and applications that blend both text and visuals, like educational tools.

Conclusion

In the battle of LLaMA 3.2 90B Vision vs. GPT-4, both models excel in different areas. LLaMA 3.2 is a powerhouse in vision-based tasks, while GPT-4 remains the champion in language and multimodal integration. Depending on the needs of your project—whether it’s high-precision image analysis or comprehensive text and image understanding—one model may be a better fit than the other.

FAQs

What is the main difference between LLaMA 3.2 and GPT-4? LLaMA 3.2 excels in visual tasks, while GPT-4 is stronger in text and multimodal applications.

Which AI is better for vision-based tasks? LLaMA 3.2 90B Vision is better suited for detailed image recognition and analysis.

How do these models handle multimodal inputs? Both models can process text and images, but LLaMA focuses more on vision, while GPT-4 balances both modalities.

Are LLaMA 3.2 and GPT-4 open-source? LLaMA 3.2's weights are openly available under Meta's community license, while GPT-4 is a proprietary model available only through OpenAI's products and API.

Which model is more suitable for general AI applications? GPT-4 is more versatile and suitable for a broader range of general AI tasks.