Microsoft’s Vision for Copilot: A New Era of AI Companionship

Have you ever wondered how and when Microsoft Copilot will become a true assistant and provide you with a new kind of support? With the latest updates to Microsoft Copilot, this vision is getting closer to reality. Announced on October 1, 2024, the refreshed Copilot vision aims to revolutionize our interaction with technology by focusing on how it feels to users, rather than just the technical details.

Microsoft Copilot: Your AI Companion

Microsoft’s Copilot is designed to be a calm, helpful, and supportive presence in your life. It goes beyond merely solving problems; it’s there to support, teach, and help you. Copilot will eventually adapt to your preferences and needs, helping you navigate life’s complexities. And no, this is not sci-fi AI in the making; it is just the next step on the road to making Copilot more and more useful to us humans. One of the keys to these new features is multimodality, which is also becoming available via Azure OpenAI Services.

In the future, Copilot will be our UI to AI. As voice and natural-language interfaces become common, we will have less need to build complex UIs to enable interactions with backend and other systems. Instead of using a traditional UI, we will just talk or type to Copilot and get the results. Perhaps we need some data analyzed? Instead of building a Power BI report, we will ask Copilot to do it. Does that sound too far in the future? Did you notice that Excel got Python support? You can use Copilot in Excel today to analyze your data, and it generates and runs Python code that is connected to the data. Why would we not be able to do that in BizChat (in the near future, I hope)? Talking to AI might also sound a bit futuristic, but with the upcoming Copilot features it will be here soon. Not in Europe, but in a few other regions first. And it won’t be just text to speech, but a Copilot voice that can mimic and understand feelings in the voice.

Why is analyzing data a great example of this? We have various needs, and some of them are ad hoc despite being somewhat complex. We may not need the results as a report; we just need to know or see what the data is telling us. Often the data lives in backend systems, which brings me to connecting Copilot to systems beyond Microsoft 365. We can already start piloting extensions and plugins that extend Copilot’s capabilities. Instead of doing a full analysis, we might just want to know the total sales for the current day or week: information that can be fetched from the backend, something we could simply ask our Copilot. What’s already in the works is taking actions in external systems. Instead of opening a web page or app and logging into a system, we do all this via our digital assistant. That is why this is extremely interesting and important to keep in mind.
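To make the "total sales for the current day or week" question concrete, here is a minimal sketch of the kind of small Python snippet an assistant could generate and run against backend data. It is only an illustration: the `SALES` records, the field names, and the helper functions are hypothetical, and it does not show what Copilot actually generates.

```python
from datetime import date, timedelta

# Hypothetical sales rows, standing in for data fetched from a backend system.
SALES = [
    {"day": date(2024, 10, 4), "amount": 200.0},  # previous week
    {"day": date(2024, 10, 7), "amount": 120.0},
    {"day": date(2024, 10, 8), "amount": 80.5},
    {"day": date(2024, 10, 9), "amount": 50.0},
    {"day": date(2024, 10, 9), "amount": 25.5},
]


def total_sales(records, start, end):
    """Sum the amounts of all records dated between start and end (inclusive)."""
    return sum(r["amount"] for r in records if start <= r["day"] <= end)


def week_start(day):
    """Return the Monday of the week that contains the given day."""
    return day - timedelta(days=day.weekday())


# "Today" is fixed here so the example is reproducible.
today = date(2024, 10, 9)
daily_total = total_sales(SALES, today, today)
weekly_total = total_sales(SALES, week_start(today), today)

print(f"Sales today: {daily_total}")
print(f"Sales this week: {weekly_total}")
```

The point is not the code itself but that the user never has to see it: the question is asked in natural language, and the answer comes back as a number.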

This won’t happen tomorrow, but it is happening sooner than we think. We can already extend Copilot and build plugins and custom copilot agents in various ways, such as Copilot Studio, Power Automate, and pro-code with Teams Toolkit and the Teams AI library. I recommend starting to experiment with these as soon as possible to make the organization future-proof.

My thoughts and visions align with Microsoft’s Copilot vision, so it is easy to be very excited about the opportunities and possibilities ahead of us on this journey. I recently took part in a great meeting with fellow MVPs from The Digital Neighborhood at our HQ in Amsterdam. Ideas and thoughts about the future were discussed from various perspectives, and it was one of my fellow MVPs who brought up the data analysis example, pointing out how code interpretation will be a real game-changer there. It already exists, at various levels of implementation. We have also seen how GPT-4o with voice works. If you haven’t seen those videos, do ask Copilot about them (or just search with Bing or Google). The future is interesting, for sure!

New Upcoming Features to Copilot

The latest updates to Copilot will include several new and enhanced features:

Copilot Voice: This feature allows you to connect with your AI companion using voice commands (multimodality). With four voice options to choose from, it’s the most intuitive way to brainstorm, ask questions, or simply vent. Copilot doesn’t have feelings, so it is a perfect companion for venting, a safe place to do that. Don’t confuse Copilot’s ability to mimic feelings in its voice with actual feelings and emotions. Copilot is a tool and an algorithm at its core, not an AGI (Artificial General Intelligence).

Copilot Daily: Start your morning with a summary of news and weather, all read in your favorite Copilot Voice. This feature helps you manage the daily barrage of information with ease. It is quite cool to see this happening, as it has appeared in so many sci-fi movies and visions of the future.

Copilot in Microsoft Edge: Copilot is now integrated into the Microsoft Edge browser, quickly helping answer questions, summarize page content, translate text, or rewrite sentences. The cool part? Multimodality, as Copilot will also understand images on web pages.

Copilot Labs: This platform allows users to test experimental features like Copilot Vision and Think Deeper, providing feedback to shape future updates.

Copilot Vision and Think Deeper

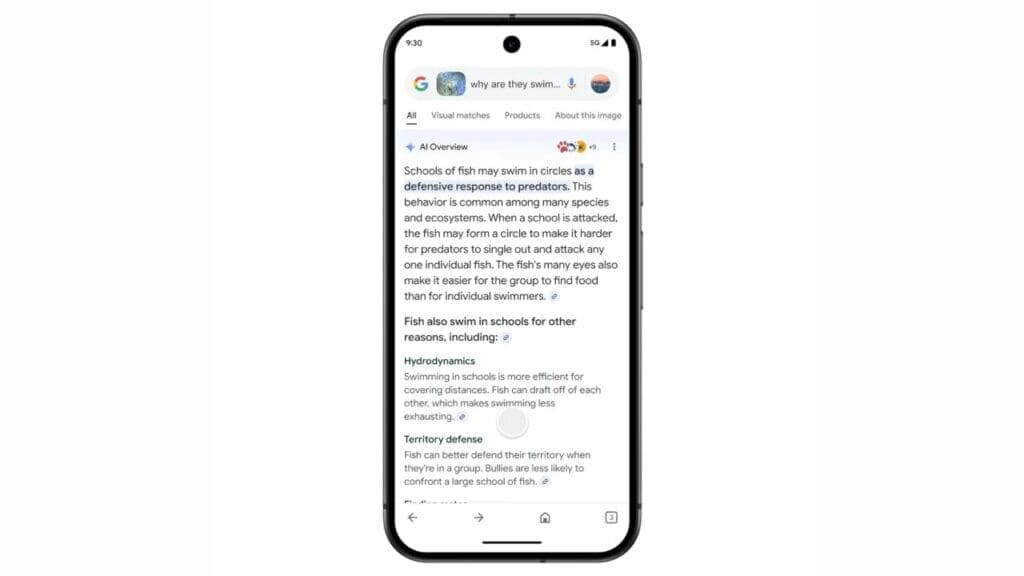

Copilot Vision: This innovative feature enables Copilot to see what you see and interact with web pages in real time, offering suggestions and answering questions without disrupting your workflow.

For Microsoft, safety and security are top priorities. Microsoft describes its approach as follows:

Copilot Vision sessions are entirely opt-in and ephemeral. None of the content Copilot Vision engages with is stored or used for training — the moment you end your session, data is permanently discarded.

The experience won’t work on all websites because we’ve taken important steps to put boundaries on the types of websites Copilot Vision can engage. We’re starting with a limited list of popular websites to help ensure it’s a safe experience for everyone.

Copilot Vision won’t work on paywalled and sensitive content for this preview. We’ve created it with both users’ and creators’ interests top of mind.

There is no specific processing of the content of a website you are browsing, nor any AI training. Copilot Vision simply reads and interprets the images and text it sees on the page for the first time along with you.

Before we launch broadly, we’ll continue to take feedback on all the above from early users in Copilot Labs, refine our safety measures and keep privacy and responsibility at the center of everything we do. Let us know what you think!

Think Deeper: Designed to reason through complex questions, this feature provides detailed, step-by-step answers for challenging queries, helping you make informed decisions. This is an early Copilot Skill that’s still undergoing development, so Microsoft placed it in experimental Copilot Labs to test and get feedback.

Regional Availability

As exciting as these features are, it’s important to note their regional rollout plans.

Copilot Voice is initially available in English in Australia, Canada, New Zealand, the United Kingdom, and the United States. Expansion to more regions and languages will follow soon.

Copilot Daily is rolling out first in the United States and the United Kingdom, with additional countries to be added shortly.

Copilot Vision will be accessible through Copilot Labs to a limited number of Copilot Pro subscribers in the United States.

Think Deeper starts its rollout this week to a limited number of Copilot Pro users in Australia, Canada, New Zealand, the United Kingdom, and the United States.

Unfortunately, for those of us in Europe, we will need to wait a bit longer for these exciting new features. Microsoft is working diligently to ensure that personalization in Copilot adheres to the Microsoft Privacy Statement, and options for offering personalization to users in the European Economic Area and the United Kingdom are still being finalized.

Read more about these updates and Microsoft’s Copilot vision in their blog post.

New Enhancements in Azure OpenAI Services

Because Copilot uses Azure OpenAI Services (AOAI) in the background (users don’t see this, they just use Copilot), advancements in AOAI make it possible to bring those features to Copilot. Microsoft just announced several updates to Azure OpenAI Services. Read on for the latest advancements and the opportunities they open up.

GPT-4o-Realtime-Preview with Audio and Speech Capabilities

The introduction of GPT-4o-Realtime-Preview marks a significant milestone: it brings advanced voice capabilities to the Microsoft Azure OpenAI Service, expanding GPT-4o’s multimodal offerings. The integration of language generation with voice interaction allows developers to craft more natural and conversational AI experiences. From creating virtual assistants to powering real-time customer support, the possibilities are vast and promising. The Copilot Voice feature mentioned above is a good example of how to use this capability.

The GPT-4o-Realtime API supports audio input and output, enabling real-time, natural voice-based interactions. This multimodal capability empowers developers to build innovative voice applications with ease, providing faster and more engaging responses that minimize the robotic tone often associated with AI-generated speech. Moreover, the API supports a wide range of languages, facilitating natural, multilingual conversations for global-facing applications.

This also means that it won’t be necessary to use the Azure Speech to Text (STT) and Text to Speech (TTS) services to create a voice interface for your AI. Adding voice will be much easier now, but that doesn’t mean we no longer need the STT and TTS services. With the Speech services we can use custom voices and photorealistic avatars, and a lot more. But for Copilot and AI apps, having these capabilities built into GPT-4o will be a big advantage in both speed and ease of use. We should no longer notice the “AI delay” we experience in the typical roundtrip from speech to text, to the LLM and back, and then text to speech.
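The difference between the two architectures can be sketched in a few lines of Python. This is only a structural illustration under stated assumptions: every function below is a hypothetical stub, nothing here calls Azure Speech or the GPT-4o-Realtime API, and no real latencies are measured. It simply shows that the classic voice roundtrip chains three sequential service calls, while a speech-in, speech-out model needs one.

```python
from typing import List

# Records which (stubbed) services are invoked, in order.
calls: List[str] = []


def speech_to_text(audio: bytes) -> str:
    """Stub for an STT service: pretend the audio bytes are already text."""
    calls.append("speech_to_text")
    return audio.decode("utf-8")


def language_model(prompt: str) -> str:
    """Stub for a text-only LLM call."""
    calls.append("language_model")
    return f"Echo: {prompt}"


def text_to_speech(text: str) -> bytes:
    """Stub for a TTS service: pretend the text bytes are audio."""
    calls.append("text_to_speech")
    return text.encode("utf-8")


def chained_roundtrip(audio: bytes) -> bytes:
    """Classic voice pipeline: three sequential service calls."""
    return text_to_speech(language_model(speech_to_text(audio)))


def realtime_model(audio: bytes) -> bytes:
    """Stub for a single speech-in, speech-out model call."""
    calls.append("realtime_model")
    return f"Echo: {audio.decode('utf-8')}".encode("utf-8")


calls.clear()
chained_reply = chained_roundtrip(b"hello")
chained_calls = list(calls)

calls.clear()
realtime_reply = realtime_model(b"hello")
realtime_calls = list(calls)

print("Chained pipeline calls:", chained_calls)
print("Realtime model calls:", realtime_calls)
```

Each extra hop in the chained version adds its own processing and network time, which is where the noticeable delay comes from.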

GPT-4o-Realtime-Preview will be available for standard and global standard deployments in East US 2 and Sweden Central for approved customers. Regional availability ensures that users across different geographical locations can access and benefit from the advanced capabilities of GPT-4o-Realtime API for Audio.

Performance That Speaks

Early adopters of the GPT-4o-Realtime API for Audio have reported remarkable results, including significantly faster responses and more natural conversations. These improvements are particularly beneficial for applications such as voice-based chatbots, virtual assistants, and real-time translators, enhancing user engagement and satisfaction.

Applications of GPT-4o-Realtime-Preview

The versatility of GPT-4o-Realtime-Preview spans various industries, transforming how businesses operate and how users interact with technology:

Customer Service: Voice-based chatbots and virtual assistants can handle customer inquiries more naturally and efficiently, reducing wait times and improving overall satisfaction.

Content Creation: Media producers can revolutionize their workflows by leveraging speech generation for use in video games, podcasts, and film studios.

Real-Time Translation: Industries such as healthcare and legal services can benefit from real-time audio translation, breaking down language barriers and fostering better communication in critical contexts.

Azure remains steadfast in its commitment to responsible AI, with safety and privacy as default priorities. The Realtime API utilizes multiple layers of safety measures, including automated monitoring and human review, to prevent misuse. Additionally, the Realtime API has undergone rigorous evaluations guided by our commitments to Responsible AI, ensuring a secure and responsible AI experience for our users.

What’s Next with GPT-4o-Realtime API for Audio?

Microsoft will continue to innovate and expand the capabilities of the GPT-4o-Realtime API for Audio, and they are excited to see how we, as partners, developers, and businesses, will leverage these new technologies to create voice-driven applications, preferably ones that push the boundaries of what’s possible. Starting today, you can explore these new capabilities in the Azure OpenAI Studio, experiment with them in the Early Access Playground, or integrate the real-time API in public preview into your applications. Be sure to review the documentation for the latest updates, dive into the available use cases, and start building with GPT-4o-Realtime API for Audio to bring your business to the next level of AI innovation.

Read more about these updates in Microsoft’s Azure OpenAI Service announcements.

A Commitment to Responsible AI

Microsoft is committed to ensuring that AI enriches people’s lives and strengthens our bonds with others, while supporting our unique and complex humanity. Copilot is not just another tool; it’s a companion designed to be by your side, always supporting you in ways that matter most.

As we embark on this exciting journey, Microsoft remains dedicated to accountability, respect, and compassion for users and society. This is a journey we promise to take together, and we couldn’t be more thrilled to start it with you.

Stay tuned for more updates and get ready to experience a new era of AI companionship with Copilot.

Published by Vesa Nopanen

I work, blog, and speak about the future of work: AI, Microsoft 365, Copilot, Microsoft Mesh, the metaverse, and other cloud services and platforms that connect the digital and the physical, and bring people together.

I have about 30 years of experience in the IT business across multiple industries, domains, and roles.